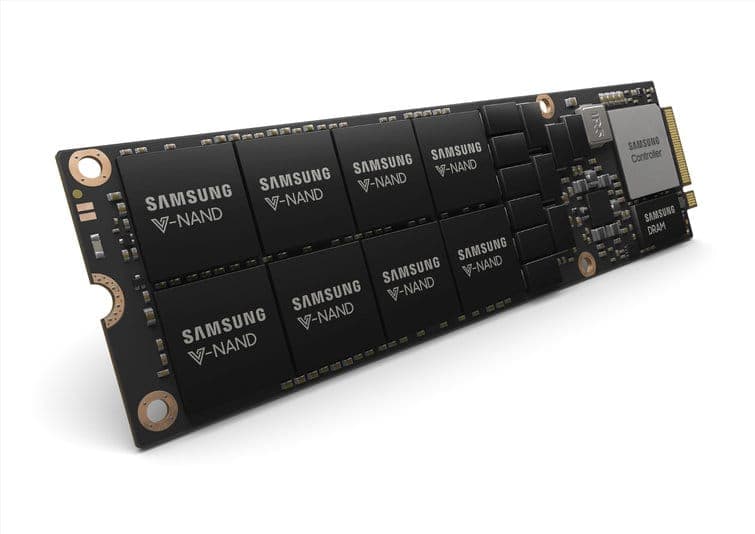

I started deep learning and I am serious about it : Start with a GTX 1060 (6GB) or a cheap GTX 1070 or GTX 1070 Ti if you can find one. I want to build a GPU cluster : This is really complicated, you can get some ideas here I am a researcher : RTX 2080 Ti or GTX 10XX -> RTX Titan - check the memory requirements of your current models I am a competitive computer vision researcher : GTX 2080 Ti upgrade to RTX Titan in 2019 I do Kaggle : GTX 1060 (6GB) for prototyping, AWS for final training use fastai library I have almost no money : GTX 1050 Ti (4GB) or CPU (prototyping) + AWS/TPU (training) I work with datasets > 250GB : RTX 2080 Ti or RTX 2080 I’ve trained multiple of these machines with 100% GPU utilization on all four GPUs for over a month without any issues or thermal throttling.Cost-efficient but expensive : RTX 2080, GTX 1080Ĭost-efficient and cheap : GTX 1070, GTX 1070 Ti, GTX 1060 I’m using Cuda 10.1 with TensorFlow (installed using conda) and PyTorch (installed using conda). The operating system I’m using is Ubuntu Server 18.04 LTS. The only differences are (1) they use a 12-core CPU instead of a 10-core CPU and (2) they include a hot swap drive bay ($50). This $7000 4-GPU rig is similar to Lambda’s $11,250 Lambda’s 4-GPU workstation. Comparison with Lambda’s 4-GPU Workstation Seagate BarraCuda ST3000DM0 RPM, $75 () 128GB RAM (more RAM reduces the GPU to disk bottleneck)Ĩ sticks of CORSAIR Vengeance 16GB DRAM, $640 () CPU Cooler (this cooler doesn’t block case airflow)Ĭorsair Hydro Series H100i PRO Low Noise, $130 () Left: The $7000 4-GPU rig | Right: The $6200 3-GPU rig from the post. 
Intel Core i9-9820X Skylake X 10-Core 3.3Ghz, $850 (03/21/19) X299 Motherboard (this motherboard fully supports 4 GPUs)ĪSUS WS X299 SAGE LGA 2066 Intel X299, $492.26 (03/21/19) Case (high airflow keeps the GPUs cool)Ĭorsair Carbide Series Air 540 ATX Case, $115 () 3TB Hard-drive (for data and models you don’t access regularly) HP EX920 M.2 1TB PCIe NVMe NAND SSD, $150 () 20-thread CPU (choose Intel over AMD for fast single thread speed) Rosewill HERCULES 1600W Gold PSU, $209 (03/21/19) 1TB m.2 SSD (for ultrafast data loading in deep learning) ZOTAC Gaming GeForce RTX 2080 Ti Blower 11GB, $1299 () Rosewill Hercules 1600W PSU (cheapest 1600W power supply) ASUS GeForce RTX 2080 Ti 11G Turbo Edition GD, $1209 ()Ģ. These 2-PCI-slot blower-style RTX 2080 TI GPU also will work:ġ. Here is each component: Ti GPUs (fastest GPU under $2000, likely for a few years) View my receipt for two of these 4-GPU rigs. Both NeweggBusiness and Amazon accept tax-exemption documents.

If you have a local MicroCenter store nearby, they often have cheap CPU prices if you purchase in a physical store.

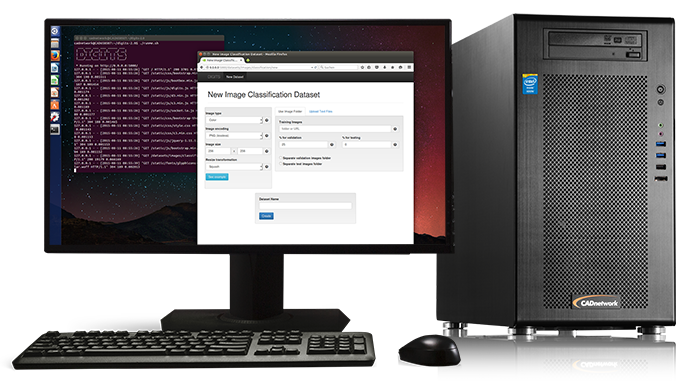

I ordered everything online via NeweggBusiness, but any vendor (e.g. I’ve included my receipt, showing the purchase of all the parts to build two of these rigs for $14000 ($7000 each). I built three variations of multi-GPU rigs and the one I present here provides the best performance and reliability, without thermal throttling, for the cheapest cost. Based on feedback that there were too many options in the previous post, I only list a best option for each component. It offers significantly higher performance compared to traditional workstations, by leveraging multiple graphical processing units (GPUs).

The goal of this post is to list exactly which parts to buy to build a state-of-the-art 4-GPU deep learning rig at the cheapest possible cost. A deep learning (DL) workstation is a dedicated computer or server that supports compute-intensive AI and deep learning workloads. Check out the previous post for component explanations, benchmarking, and additional options for this 4-GPU deep learning rig. In the previous post I stated, “there is no perfect build,” but if there was a perfect build at the lowest cost, what would it be? That’s what I show here. This is a good start toward making deep learning more accessible, but if you’d rather spend $7000 instead of $11,250+, here’s how. The post went viral on Reddit and in the weeks that followed Lambda reduced their 4-GPU workstation price around $1200. In a previous post, Build a Pro Deep Learning Workstation… for Half the Price, I shared every detail to buy parts and build a professional quality deep learning rig for nearly half the cost of pre-built rigs from companies like Lambda and Bizon.
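The parts list pairs four RTX 2080 Ti cards and an i9-9820X with a 1600W power supply, but it doesn't show the power math behind that choice. The short Python sketch below makes a rough estimate. Its figures are assumptions, not numbers from this post: about 250 W of board power per RTX 2080 Ti (the reference spec), a 165 W TDP for the i9-9820X, and a hypothetical 150 W allowance for the motherboard, RAM, drives, and fans.

```python
# Rough PSU power-budget sketch for a 4-GPU rig.
# ASSUMPTIONS (not measured on this build): 250 W per RTX 2080 Ti (reference
# board power), 165 W TDP for the Intel Core i9-9820X, and a hypothetical
# 150 W allowance for motherboard, RAM, drives, and fans. Real GPUs can spike
# briefly above their nominal rating, which is one reason to keep headroom.

GPU_WATTS = 250     # assumed per-card board power
NUM_GPUS = 4
CPU_WATTS = 165     # assumed CPU TDP
OTHER_WATTS = 150   # hypothetical allowance for the rest of the system
PSU_WATTS = 1600    # rating of the PSU in the parts list


def estimated_load(num_gpus: int = NUM_GPUS) -> int:
    """Estimated sustained system draw in watts under full GPU load."""
    return num_gpus * GPU_WATTS + CPU_WATTS + OTHER_WATTS


def psu_headroom(psu_watts: int = PSU_WATTS, num_gpus: int = NUM_GPUS) -> tuple[int, float]:
    """Return (spare watts, fraction of the PSU rating in use)."""
    load = estimated_load(num_gpus)
    return psu_watts - load, load / psu_watts


if __name__ == "__main__":
    load = estimated_load()
    spare, fraction = psu_headroom()
    print(f"Estimated load: {load} W")
    print(f"Headroom on a {PSU_WATTS} W PSU: {spare} W ({fraction:.0%} of rating)")
```

Under these assumptions the estimate is 1315 W. That leaves about 285 W of headroom, with the PSU running at roughly 82% of its rating. If you can measure actual draw (for example with `nvidia-smi` for the GPUs), substitute those numbers for the assumed constants.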
